Software for AI on Ubuntu

Artificial Intelligence (AI) continues to be one of the fastest-growing fields in science and technology, solving challenges across industries like healthcare, education, banking, manufacturing, security, and more.

Linux, particularly Ubuntu, remains a popular choice for AI development thanks to its support for virtually every major programming language and for the leading AI frameworks. To help you keep up with the latest tools, we’ve updated our list with five open-source AI tools for Ubuntu that are making waves in 2025.

Best AI Tools on Ubuntu in 2025

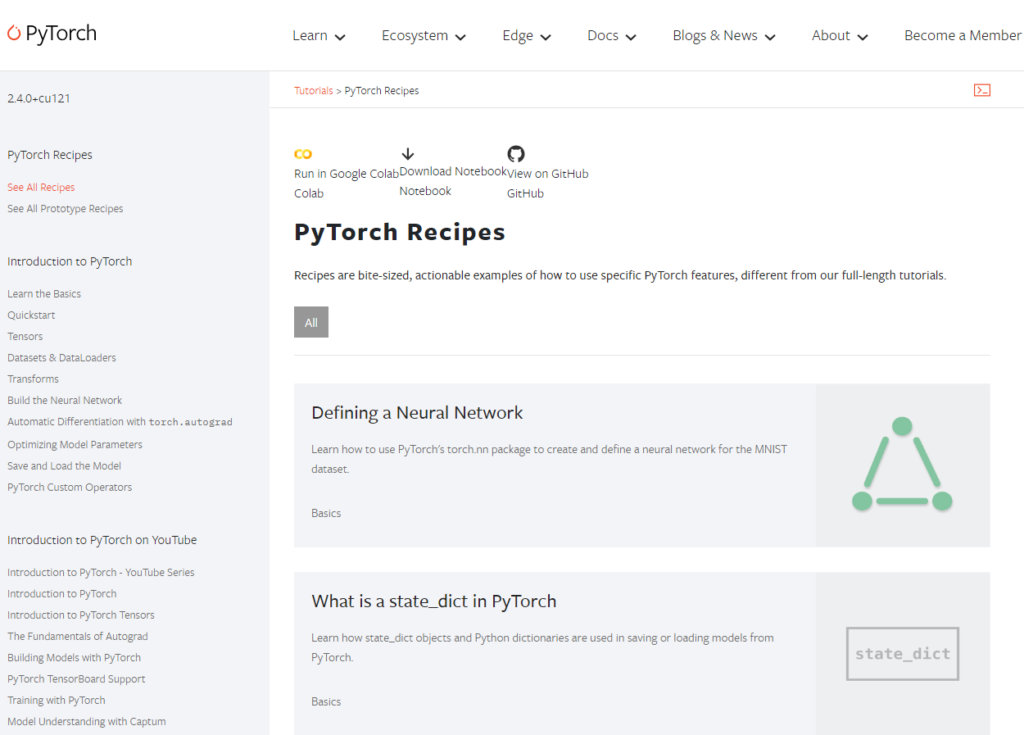

#1. PyTorch

PyTorch is a popular open-source machine learning framework originally developed by Facebook’s AI Research lab, and right now it is easily the tool I would personally recommend you try first. It is well built and well supported, and with Python as widespread as it is, it’s a no-brainer to at least know what PyTorch can do.

PyTorch is known for its dynamic computation graphs, which make it highly flexible and user-friendly, especially for research and development. PyTorch is widely used for deep learning applications such as computer vision and natural language processing, and it supports training on GPUs, making it an essential tool for modern AI development.

Why you might like it:

- Dynamic computation graphs for flexibility and ease of debugging.

- Support for CUDA-capable GPUs for accelerated training.

- Extensive ecosystem including TorchVision, TorchAudio, and more for various AI tasks.

- Seamless integration with Python and robust community support.

- Widely used in research and production for deep learning, computer vision, and NLP.
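To give a feel for what “dynamic computation graphs” means in practice, here is a minimal sketch, assuming PyTorch is installed (for example with `pip install torch`). The function `f` and the input values are made up for illustration: the graph is recorded while ordinary Python runs, including the `if` branch, and `backward()` then computes gradients through whichever path was actually taken.

```python
import torch


def f(x: torch.Tensor) -> torch.Tensor:
    # Plain Python control flow: the graph is built as this code executes,
    # so the branch taken can depend on the data itself.
    if x.sum() > 0:
        return (x ** 2).sum()
    return (x ** 3).sum()


x = torch.tensor([1.0, 2.0, 3.0], requires_grad=True)
y = f(x)        # x.sum() > 0, so y = 1 + 4 + 9 = 14
y.backward()    # autograd walks the graph that was just recorded
print(y.item())  # 14.0
print(x.grad)    # d/dx of sum(x^2) is 2x -> tensor([2., 4., 6.])

# Use a CUDA GPU if PyTorch can see one, otherwise fall back to the CPU.
device = "cuda" if torch.cuda.is_available() else "cpu"
z = torch.randn(2, 3, device=device)
print(z.device)
```

On a machine without a CUDA-capable GPU the last line simply reports `cpu`, so the same script runs unchanged on any Ubuntu box.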

#2. TensorFlow

TensorFlow is a powerful open-source machine learning platform developed by Google. TensorFlow 2 streamlined the framework to make building models more accessible to developers of all skill levels, and its integration with Keras provides a simple, flexible interface for building and deploying deep learning models across cloud, mobile, and web platforms.

Why you might like it:

- User-friendly APIs with eager execution for easy debugging and model building.

- Integration with Keras for quick prototyping and model customization.

- Supports multiple platforms including desktop, mobile, and web.

- Powerful tools like TensorBoard for visualization and TensorFlow Extended (TFX) for model deployment.

- High performance on CPUs, GPUs, and TPUs.
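Here is a minimal sketch of the eager-execution and Keras workflow, assuming TensorFlow 2.x is installed (for example with `pip install tensorflow`). The toy data, a noisy line y = 2x + 1, is hypothetical and only there so the tiny one-layer model has something to fit.

```python
import numpy as np
import tensorflow as tf

# Eager execution: operations run immediately and return concrete values.
t = tf.constant([[1.0, 2.0], [3.0, 4.0]])
total = tf.reduce_sum(t).numpy()
print(total)  # 10.0

# Hypothetical toy data: 200 noisy samples of y = 2x + 1.
tf.keras.utils.set_random_seed(0)
rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=(200, 1)).astype("float32")
y = (2.0 * x + 1.0 + rng.normal(0.0, 0.05, size=(200, 1))).astype("float32")

# A single Dense unit is just a linear model: y_hat = w * x + b.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(1,)),
    tf.keras.layers.Dense(1),
])
model.compile(optimizer=tf.keras.optimizers.SGD(learning_rate=0.1), loss="mse")
model.fit(x, y, epochs=50, batch_size=32, verbose=0)

# After training, the learned weight and bias should sit close to 2 and 1.
weight, bias = model.layers[0].get_weights()
print(weight.ravel(), bias)
```

Swapping the single `Dense(1)` for a deeper stack of layers is all it takes to move from this toy regression to a real network; the `compile`/`fit` calls stay the same.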

#3. Hugging Face Transformers

Hugging Face Transformers is an open-source library that provides state-of-the-art pre-trained models for natural language processing tasks. It simplifies the process of integrating models like BERT, GPT, and T5 into applications, enabling developers to perform tasks such as text classification, question answering, and language generation. Hugging Face has become a leading resource for NLP, making cutting-edge AI technology accessible and easy to implement.

Why you might like it:

- Access to thousands of pre-trained models for NLP tasks.

- Supports a wide range of architectures including BERT, GPT, and T5.

- Seamless integration with PyTorch, TensorFlow, and JAX.

- Easy deployment on various platforms including cloud, mobile, and web.

- Extensive documentation and active community support.
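As a quick taste of the pre-trained models, here is a minimal sketch using the high-level `pipeline` helper, assuming `transformers` and a PyTorch backend are installed (for example with `pip install transformers torch`). The model id below is our choice of a small, commonly used sentiment checkpoint, and the example sentences are made up; the first run needs network access to download the model, after which it is served from the local cache.

```python
from transformers import pipeline

# Load a small pre-trained sentiment model from the Hugging Face Hub.
classifier = pipeline(
    "sentiment-analysis",
    model="distilbert/distilbert-base-uncased-finetuned-sst-2-english",
)

# Hypothetical example inputs.
texts = [
    "Setting up my AI environment on Ubuntu was painless.",
    "The build failed again and I have no idea why.",
]
results = classifier(texts)

# Each result is a dict with a predicted label and a confidence score.
for text, result in zip(texts, results):
    print(f"{result['label']:>8} {result['score']:.3f}  {text}")
```

Changing the task string (for example to `"text-generation"` or `"question-answering"`) and the model id is usually all it takes to try a different kind of model.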

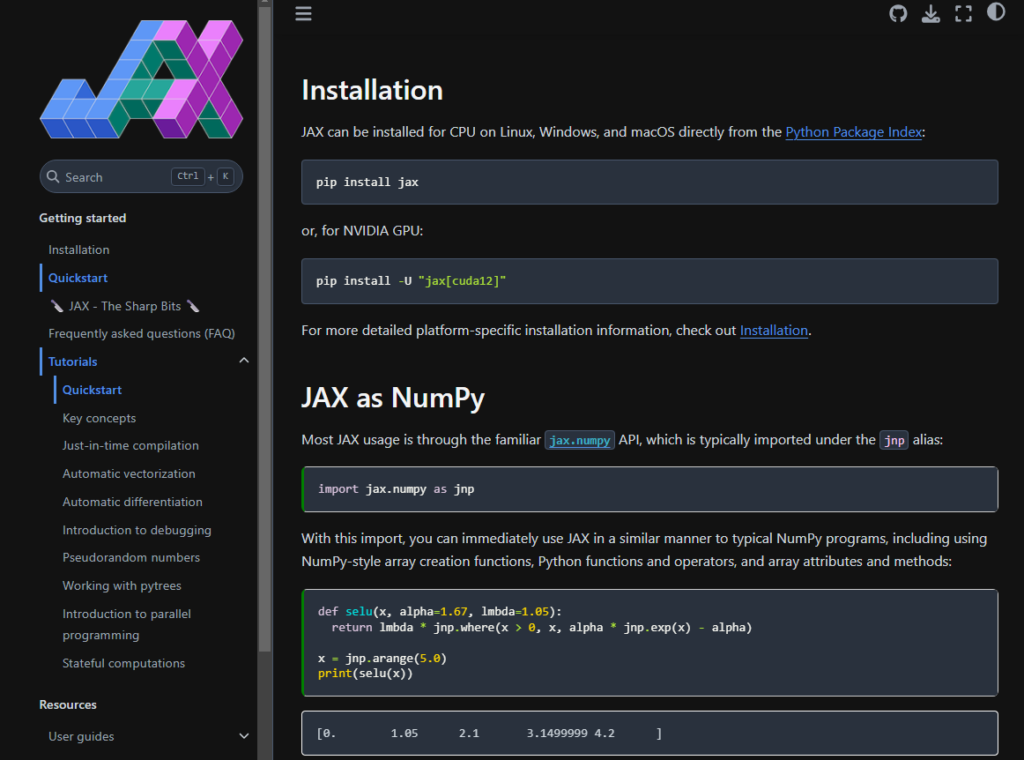

#4. JAX

JAX is a high-performance numerical computing library developed by Google and designed for machine learning research. It offers automatic differentiation and can compile and run code on CPUs, GPUs, and TPUs, making it well suited to complex AI models. JAX provides a NumPy-compatible API, giving Python users a familiar interface while adding advanced capabilities for parallelization and vectorization, both crucial for AI development.

Why you might like it:

- High-performance numerical computing with automatic differentiation.

- Ability to compile and run code on CPUs, GPUs, and TPUs for fast computation.

- Compatible with NumPy API, making it easy for Python users.

- Advanced support for parallelization and vectorization.

- Ideal for machine learning research, including training neural networks.
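The three features that make JAX stand out, automatic differentiation, compilation, and vectorization, fit in a few lines. This is a minimal sketch assuming JAX is installed (for example with `pip install jax`); the loss function and the small arrays are hypothetical and chosen so the results are easy to check by hand.

```python
import jax
import jax.numpy as jnp


def loss(w, x, y):
    # Mean squared error of a linear model, written with the NumPy-style API.
    pred = x @ w
    return jnp.mean((pred - y) ** 2)


# jax.grad differentiates with respect to the first argument (w);
# jax.jit compiles the resulting gradient function with XLA.
grad_loss = jax.jit(jax.grad(loss))

x = jnp.array([[1.0, 2.0], [3.0, 4.0]])
y = jnp.array([1.0, 2.0])
w = jnp.zeros(2)

g = grad_loss(w, x, y)
print(g)  # [-7. -10.]


def square_norm(v):
    return jnp.dot(v, v)


# jax.vmap turns a function of one vector into one that maps over a batch of rows.
norms = jax.vmap(square_norm)(x)
print(norms)  # [ 5. 25.]
```

With w = 0 the residuals are simply -y, so the gradient (2/n)·xᵀ(xw - y) works out to [-7, -10], which is a handy sanity check when you start writing your own loss functions.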

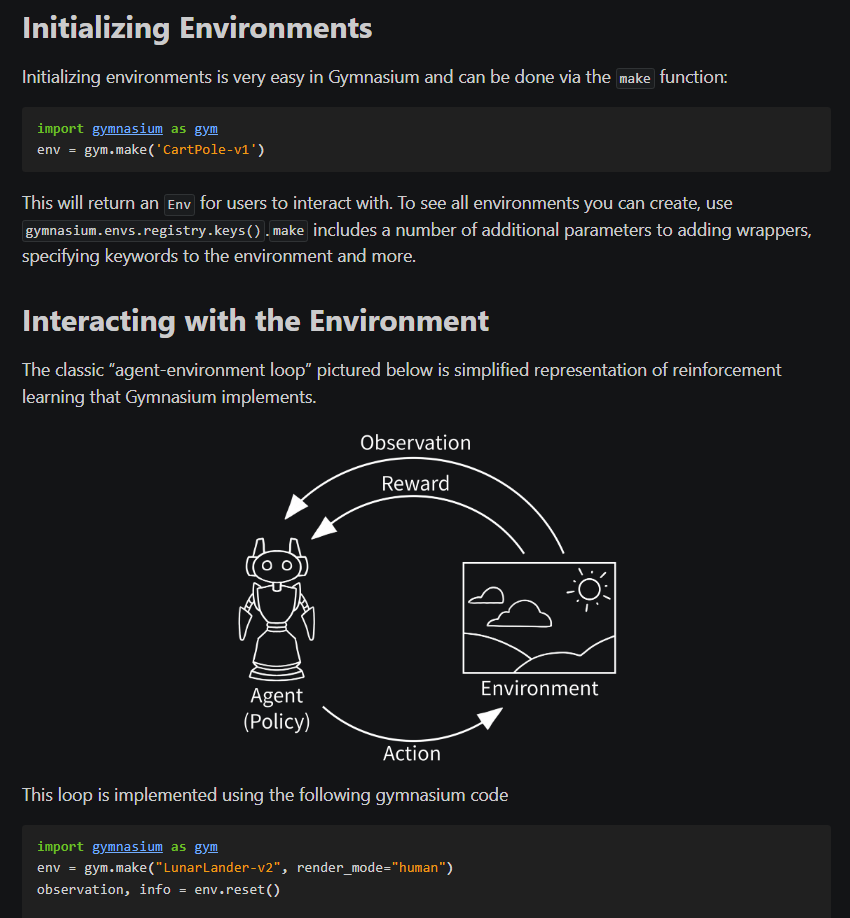

#5. Gymnasium

Gymnasium, the maintained successor to OpenAI’s Gym, is an open-source toolkit designed for developing and comparing reinforcement learning algorithms. It provides a wide array of environments that range from simple tasks to complex simulations, enabling developers to test and refine their AI models. Gymnasium maintains compatibility with legacy Gym environments while introducing new environments and features to keep up with advancements in reinforcement learning. It is widely used in both academic research and industry, making it a valuable tool for anyone working with reinforcement learning on Ubuntu.

Why you might like it:

- Simple, Pythonic API that is compatible with the original Gym environments.

- Supports a broad range of environments, including classic control tasks, robotic simulations, and custom environments.

- Improved support for vectorized environments and advanced wrappers for customizing the reinforcement learning process.

- Active maintenance and updates ensure continued relevance and integration of the latest developments in reinforcement learning.

Speaking of AI and programming, don’t forget to check out our list of the best Linux courses on Udemy, Edureka, and edX.

Why not also read our guide to the best VM software on Linux?